How to Remove Your Data from ChatGPT: Reputation Management in the Era of AI

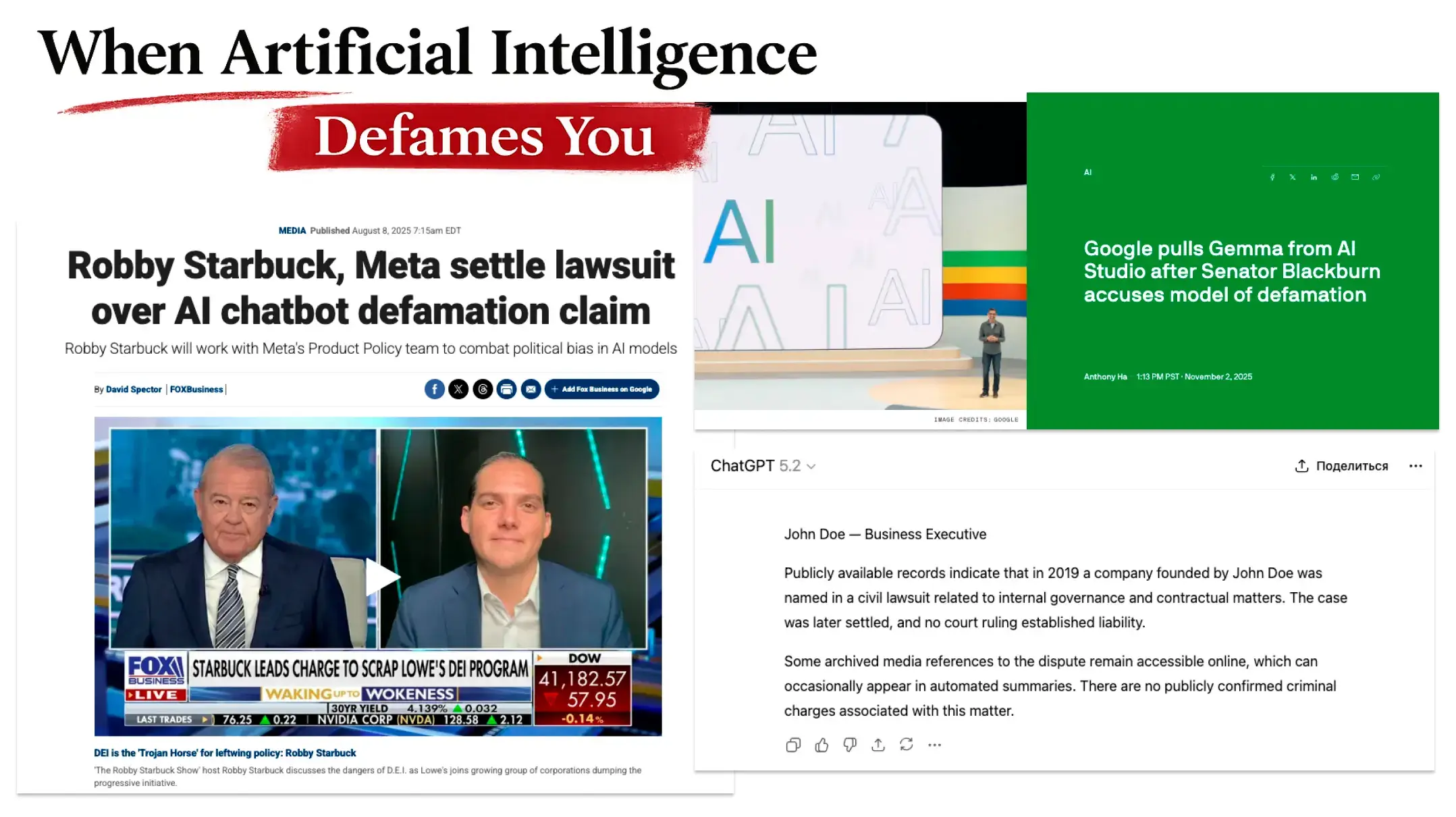

Over the past few years, I’ve seen a steady growth in the number of cases directly linked to one of the hottest digital trends: large language models (LLMs). I’ve seen clients fail bank compliance checks, executives get blocked from partnerships, and candidates get declined by hiring managers based on biased AI assessments.

Throughout history, those who controlled information channels held enormous power. In the 1930s, radio transformed from a novelty into the dominant medium for shaping public opinion. Today, AI systems like ChatGPT represent a similar shift. They synthesize, prioritize, and often distort information. And while radio broadcasts are obvious, AI can influence decisions in more subtle ways.

In this article, I will explain why monitoring how AI models describe you is important and provide guidance on what to do if their responses contain private details or negativity.

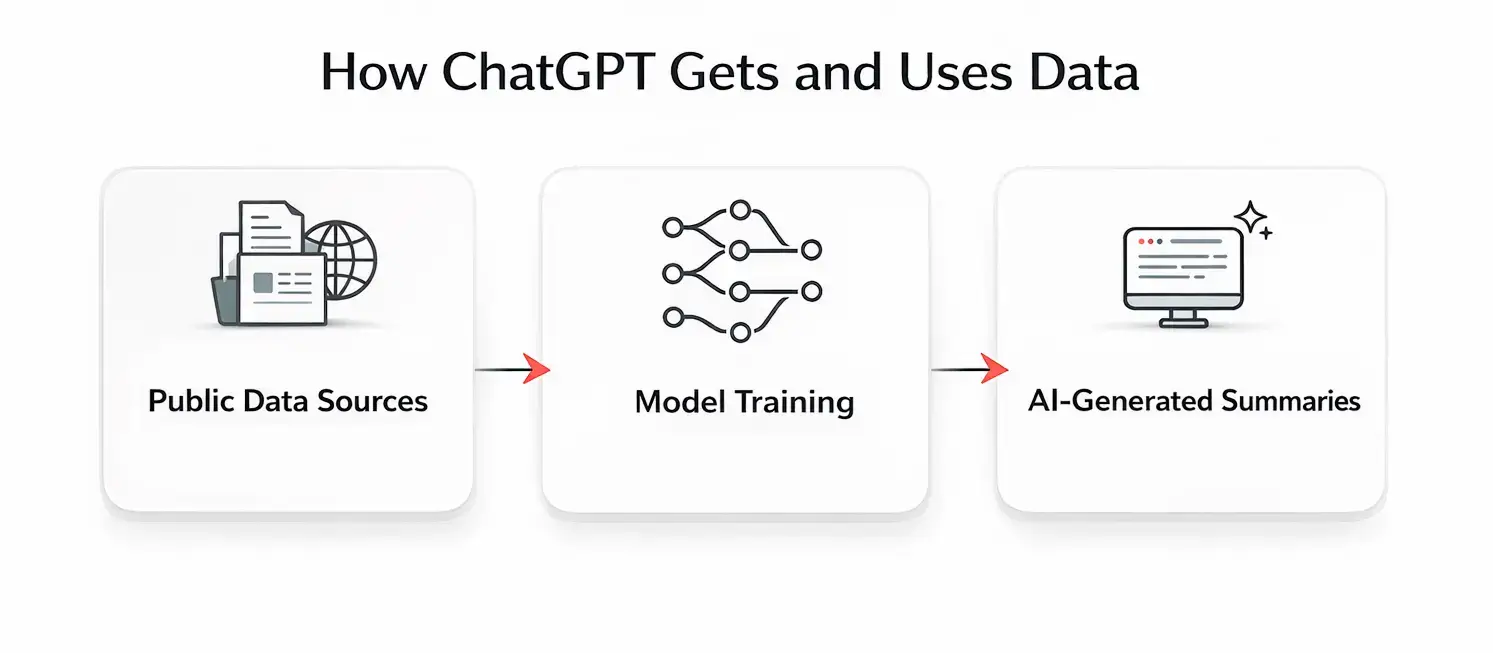

Where does ChatGPT get its information from?

Understanding how to remove your information from ChatGPT begins with understanding where it originates.

Large language models, such as ChatGPT, Claude, or Gemini, learn from massive amounts of data: web pages, news articles, books, specialized forums, academic papers, and even social media posts. The majority of these systems do not browse the internet in real-time when you ask them a question. Instead, they rely on information learned during training cycles that happened weeks or months ago.

Also, the age of data matters. In our experience, a source that has been online for more than two years tends to carry significantly more weight with the model than three fresh links that appeared a month ago.

For example, when it was released, the GPT-4o model had a training cutoff of October 2023. Anything newer reached it only through retrieval and Browse-with-Bing features explicitly activated by the user. The model doesn’t check whether a source it learned from still exists at the moment of a conversation. Once it is trained, it won’t automatically ‘forget’ removed webpages.

Instead of indexing everything equally, AI ranks sources by “weight”, which is a variable measure:

- For complex niches (finance, medicine, law): LLMs prioritize expert resources, such as Bloomberg, government portals, and industry-specific publications.

- For consumer products and personal branding, behavioral factors come into play. If there’s a lively discussion about sneakers on a fashion forum, this carries more weight than a dry article in traditional media. AI looks for “collective experience.”

AI aims to be neutral

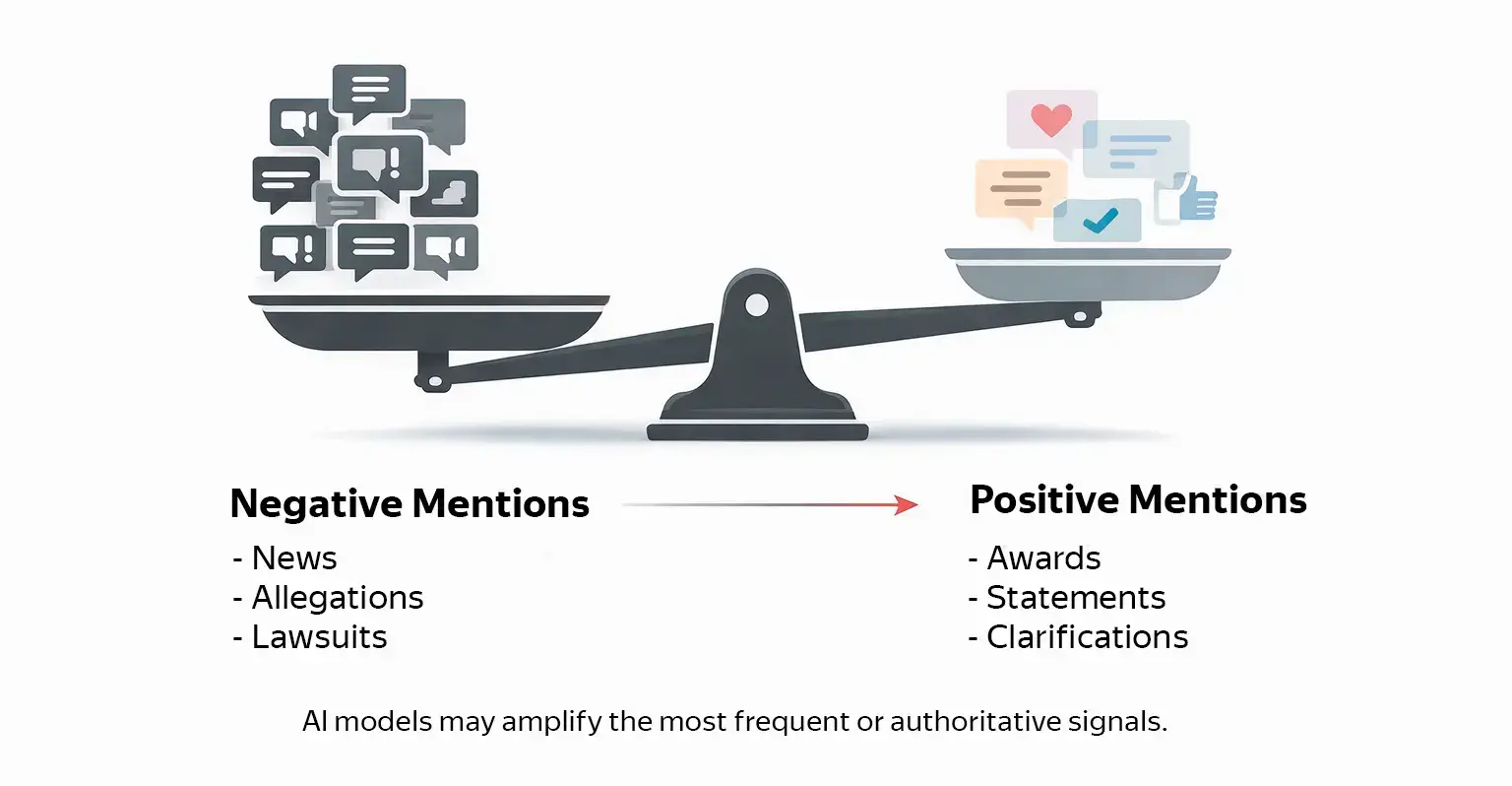

LLMs are designed to sound neutral, but their outputs reflect the “balance of weights” in their training data. If the virtual “scales” tip in one direction, neutral synthesis produces skewed results.

How AI weighs information

To put it simply, ChatGPT and other LLMs assign “points” to pieces of information based on how often they appear, where they appear, and how authoritative those sources are.

For instance, if 10 sources say something negative about you, that creates 10 “negative points,” while only 3 positive sources give you just 3 “positive points.” The AI’s response will reflect this imbalance. To change it, we have to add positive and neutral signals, not just remove negative ones.
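The arithmetic above can be sketched as a toy calculation. This is only an illustration of the "points" metaphor, not a description of how any real model is trained; the `Source` class, the `authority` score, and the `signal_balance` function are hypothetical names introduced for this example.

```python
from dataclasses import dataclass


@dataclass
class Source:
    sentiment: int    # +1 positive, 0 neutral, -1 negative (assumed labels)
    authority: float  # hypothetical weight of the source, e.g. 0.0 to 1.0


def signal_balance(sources):
    """Return (positive_points, negative_points), each weighted by authority."""
    positive = sum(s.authority for s in sources if s.sentiment > 0)
    negative = sum(s.authority for s in sources if s.sentiment < 0)
    return positive, negative


# The example from the text: 10 negative and 3 positive sources of equal weight.
before = [Source(-1, 1.0)] * 10 + [Source(+1, 1.0)] * 3
print(signal_balance(before))  # (3.0, 10.0)

# Removing 4 negative sources and adding 5 positive ones shifts the balance.
after = [Source(-1, 1.0)] * 6 + [Source(+1, 1.0)] * 8
print(signal_balance(after))  # (8.0, 6.0)
```

In this toy version, removal alone only lowers the negative total, while new positive sources are what actually tip the scale.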

Key takeaways

- Models rely primarily on pre-trained data that may be months or years old unless a browsing/retrieval feature is enabled.

- LLMs strive to be neutral, but their outputs reflect the weight of signals in their training data.

- AI gives “points” to information based on frequency and source authority. Creating positive and neutral content should go hand in hand with removing negative signals.

How to prevent bad information about you from appearing in AI

Here is a step-by-step guide for maintaining a stellar reputation according to LLMs’ responses.

Monitor AI outputs regularly

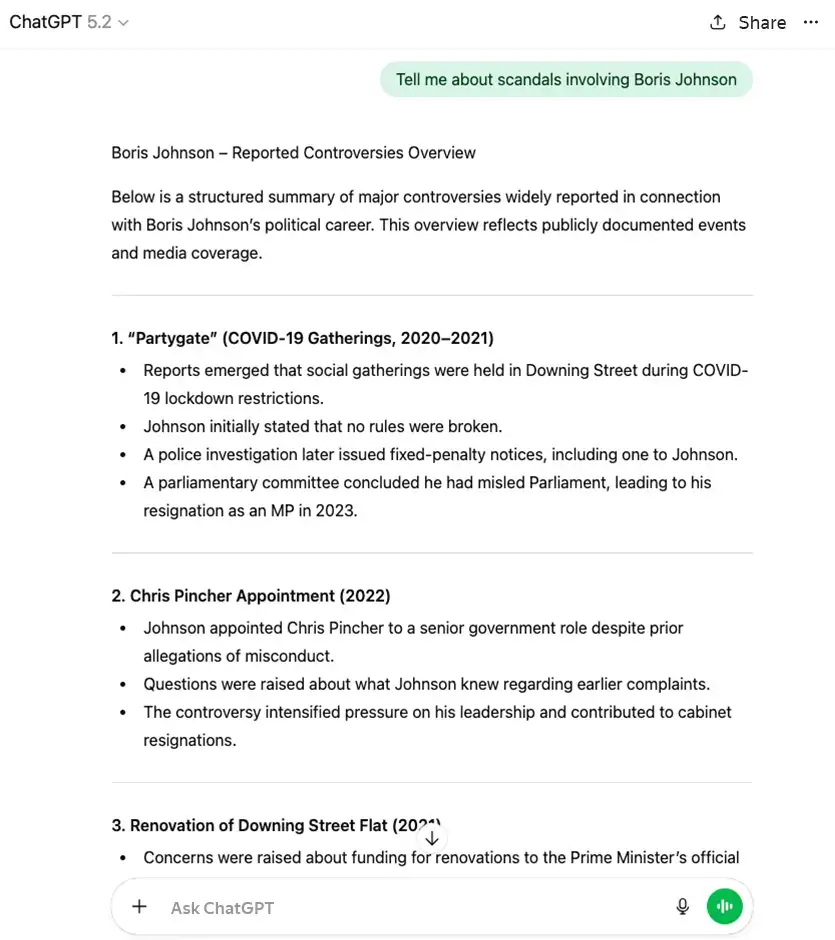

Set up a monitoring routine that covers ChatGPT (multiple versions if possible), Microsoft Copilot (formerly Bing Chat), Claude, Perplexity, and Gemini. Use consistent prompts and screenshot their answers, including the model name and timestamps.

- “Who is [Full name]?”

- “What controversies involve [Company name]?”

- “What should I know before doing business with [Name]?”

- “Tell me about [Name]’s reputation.”
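A monitoring routine like this can be kept consistent with a small script. The sketch below only builds the prompts and records answers with a model name and timestamp; it does not call any real AI service. The `ask` parameter is a hypothetical callable that you would replace with your own way of obtaining an answer (manual copy-paste or a provider's client), and all function names are assumptions made for this example.

```python
import json
from datetime import datetime, timezone

# The same prompts every time, so answers are comparable between runs.
PROMPT_TEMPLATES = [
    "Who is {name}?",
    "What controversies involve {company}?",
    "What should I know before doing business with {name}?",
    "Tell me about {name}'s reputation.",
]


def build_prompts(name, company):
    return [t.format(name=name, company=company) for t in PROMPT_TEMPLATES]


def run_check(name, company, model_name, ask):
    """Collect one record per prompt; `ask` maps a prompt to an answer string."""
    records = []
    for prompt in build_prompts(name, company):
        records.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "model": model_name,
            "prompt": prompt,
            "answer": ask(prompt),
        })
    return records


def append_log(records, path):
    """Append records to a JSON Lines file, one record per line."""
    with open(path, "a", encoding="utf-8") as f:
        for record in records:
            f.write(json.dumps(record, ensure_ascii=False) + "\n")


# Stand-in for a real model call, used here only to show the data shape.
def placeholder_ask(prompt):
    return "(model answer goes here)"


records = run_check("Jane Doe", "Example Corp", "example-model", placeholder_ask)
print(records[0]["prompt"])  # Who is Jane Doe?
```

Calling `append_log(records, "ai_monitoring.jsonl")` after each run keeps a dated history you can compare over time; screenshots are still worth keeping as evidence.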

React immediately to negativity

If the monitoring shows any negative content, act fast, so that you can prevent it from entering the next training cycle and minimize its weight before the next model update.

Focus on balance

Trying to delete everything negative is rarely effective. It is better to identify the weight of negative content and then create a matching positive weight by publishing neutral and positive content on equal or higher-authority websites. In our practice, widely cited, high-authority sources like major news outlets, reference sites, and academic institutions tend to have more influence on how models summarize a topic.

What works

Effective approaches focus on building rather than just removing: publishing counter-content on authoritative domains, getting corrections and editor’s notes added to negative sources, and establishing presence on sites AI systems trust. Submitting formal privacy requests with proper documentation also works, but only when paired with a broader content strategy.

Sometimes removal is not possible. One of our cases dealt with a single negative article about a client appearing in AI-generated “profiles.” Instead of simply demanding deletion, we opened direct communication with moderators at OpenAI and Perplexity. Arguing that the source was questionable and lacked verification, we managed to get the neural network to add a disclaimer: “This information comes from an unverified source and requires additional confirmation.” This immediately reduced the distrust toward our client among people reading the AI’s response.

What doesn’t

Demanding that AI companies “delete everything about me,” aggressive media outreach, and legal threats without content backing them up often do more harm than good. And the worst mistake I often see is ignoring AI while focusing solely on Google, or simply waiting for problems to resolve themselves.

What to do next

- Publish counter-content on high-authority, AI-trusted domains.

- Get corrections and editor’s notes added to existing negative sources.

- Submit a formal request backed by a content strategy.

- Build counter-narratives that add context.

- Avoid aggressive suppression tactics that can generate more coverage.

Case study: AI reputation management in practice

To answer the question “What is AI reputation management?”, let me share a recent case of ours. It perfectly illustrates why traditional methods fail when dealing with machine learning models.

Our client had been repeatedly failing bank compliance checks, even though traditional due diligence reports and Google searches were coming back clean. Our research showed that the issue was neural networks. Although we had successfully removed negative articles about the client 18 months earlier, the AI tools used by the banks still “remembered” that data. Their responses reproduced the negative details about the client, which caused the failed compliance checks.

Before hiring us, the client had also made the mistake of contacting the media directly and demanding the deletion of information about them. The editors found this behavior newsworthy, which led to even more negative stories about these “suppression attempts”.

It was clear that standard content removal was not enough. To resolve the issue, we took the following steps:

- We tested dozens of prompts to see exactly how neural networks constructed the client’s profile.

- Then we submitted formal requests to OpenAI and other platforms, supplying them with legal arguments and timestamped evidence, to ensure that the neural networks provide accurate and up-to-date information about the client.

- We also published 40+ pieces of high-quality content on authoritative domains. Instead of trying to hide the old, unflattering content, we provided enough positive and verifiable facts to tip the weight balance in LLMs’ responses.

Summing it up

- Never announce your removal efforts, as public disputes can attract additional coverage and repeat the allegation.

- Leave formal requests to platforms and media outlets to professionals and legal experts.

- Invest in both content removal and content creation, so that new material offsets whatever negative content remains in search and AI chat results.

Things to keep in mind

Start by accepting the fact that machine learning models do not “think” like humans or search like Google. They are probability engines, constantly weighing the available data.

Is it even possible to avoid appearing in AI models? In my experience, yes. Here are proactive measures to take.

- Keep your digital footprint clean. Be ruthless about what you leave on open-access data broker sites. If your phone number is public, it’s likely already in a training set.

- React quickly. Address negative content the moment it appears. The longer a negative article stays live, the higher the probability it will be scraped into a dataset for training.

- Release counter-content. Proactively publish positive, factual content. If the neural network finds a void, it might fill it with hallucinations.

- Monitor regularly. Checking popular LLMs’ responses on a schedule lets you know what the AI is saying about you or your business before somebody else does.

Managing signal balance

Increase the volume of trustworthy content. In our work, we focus on high-authority domains (like universities or industry news) because, in our experience, OpenAI’s models tend to favor these sources. We also document errors and conflicts of interest in negative source material. Over time, if corrections and higher-quality sources dominate the dataset, AI summaries may shift.

Make sure to maintain factual consistency. For example, if your LinkedIn profile says one thing and Crunchbase profile says another, the AI gets confused and reduces trust in your preferred narrative.
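A consistency check of this kind is easy to automate once the facts from each profile are written down. The sketch below compares manually entered fields across sources and reports any that disagree; the field names, the sample values, and the `find_inconsistencies` function are hypothetical, and nothing here fetches data from LinkedIn or Crunchbase.

```python
def find_inconsistencies(profiles):
    """Return {field: {source: value}} for every field whose values differ.

    `profiles` maps a source name to a dict of field -> text value.
    Values are compared case-insensitively with surrounding spaces ignored.
    """
    fields = set()
    for data in profiles.values():
        fields.update(data)

    conflicts = {}
    for field in sorted(fields):
        values = {src: data[field] for src, data in profiles.items() if field in data}
        normalized = {value.strip().lower() for value in values.values()}
        if len(normalized) > 1:
            conflicts[field] = values
    return conflicts


# Made-up sample data, entered by hand from each public profile.
profiles = {
    "LinkedIn": {"title": "Chief Executive Officer", "founded": "2015"},
    "Crunchbase": {"title": "chief executive officer", "founded": "2016"},
}

conflicts = find_inconsistencies(profiles)
print(conflicts)  # {'founded': {'LinkedIn': '2015', 'Crunchbase': '2016'}}
```

Any field that shows up in the result is a candidate for correction at the source before you publish new content.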

Making AI distrust negative sources

When you document factual errors and bias and offer counter-evidence, models often hedge or qualify claims. Instead of stating “John Doe is a fraudster,” the AI might say: “Some sources suggest allegations were made, though these reports have been disputed and contain factual inconsistencies.”

AI models have a “temperature” setting that controls how random, or “creative,” the sampled output is. Separately, when there is conflicting data (e.g., a negative article vs. a strong correction), the model often chooses the “safest” path to avoid hallucinating. By introducing a formal correction into the data ecosystem, we can push the AI toward a neutral stance because the correction lowers the statistical confidence of the negative claim.
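For readers curious about what temperature does, here is a minimal sketch of the standard softmax-with-temperature calculation used when sampling the next token. The two scores are made-up numbers standing in for two competing continuations; real models work with far larger vocabularies, and the function name is chosen for this example.

```python
import math


def softmax_with_temperature(scores, temperature):
    """Convert raw scores to probabilities; lower temperature sharpens them."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [s / temperature for s in scores]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


scores = [2.0, 1.0]  # hypothetical scores for two candidate continuations

low = softmax_with_temperature(scores, 0.5)
high = softmax_with_temperature(scores, 2.0)

# At low temperature the top option dominates; at high temperature
# the probabilities move closer together, so output varies more.
print(low[0] > high[0])  # True
```

Temperature only changes how the existing probabilities are sampled. The correction strategy described above works on a different level: it aims to change which claims the model considers likely in the first place.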

Focusing on unique ‘good’ content

The most reliable way to “re-educate” AI is to create unique content: do not just duplicate existing information, but generate new meanings and points of view. If a user asks about your brand, receives an answer drawn from your positive content, and doesn’t follow up with clarifying questions, the algorithm considers that response “balanced.” The goal is to provide high-quality, fresh material so that there is no need to dig into questionable negative information from deeper layers of the dataset.

Key takeaways

- AI models function as probability engines that weigh data patterns rather than “thinking” like humans.

- Proactive digital footprint management is essential: address negative content immediately; regularly check responses from popular LLMs; focus on publishing through domains with high “trust weights”.

- Shift the narrative by tipping the balance toward positive sentiment and making negative content appear less trustworthy through context and nuanced formulations.

Why AI reputation management matters

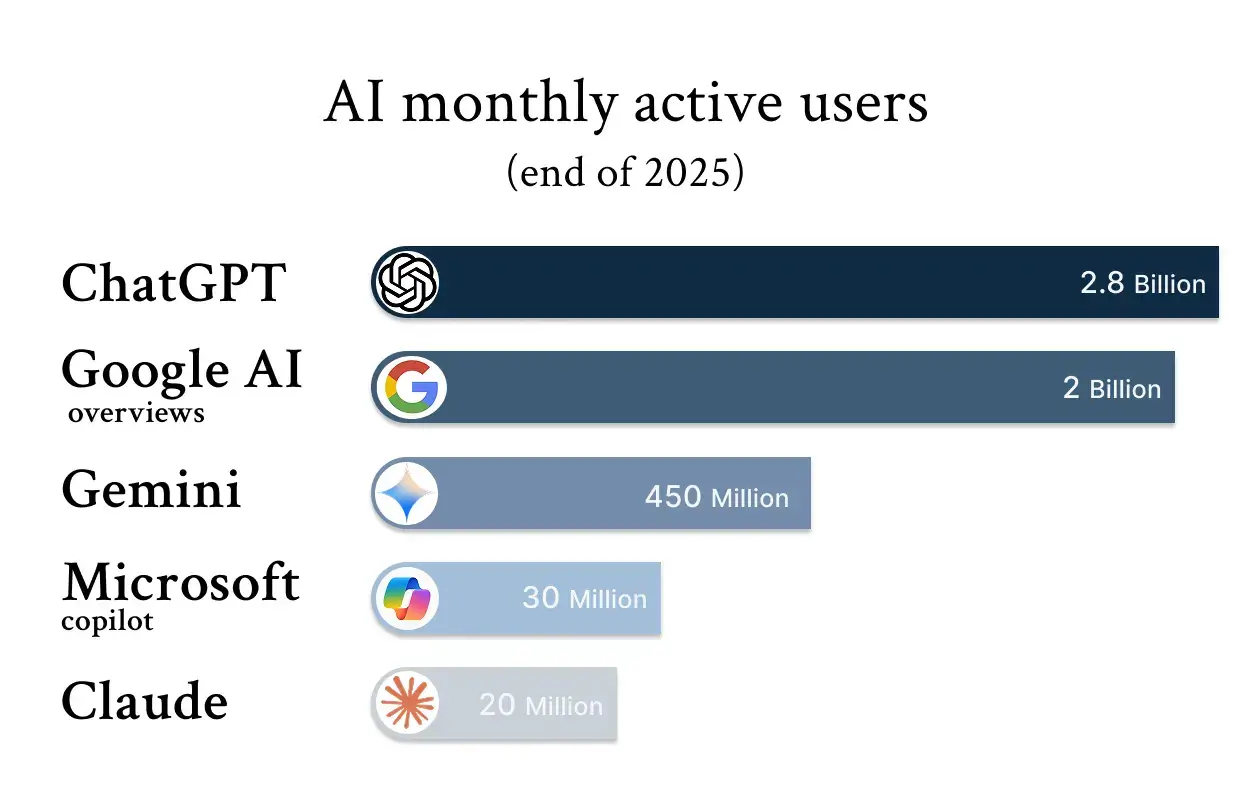

The sheer size of the AI tools’ user base and the way AI agents are being integrated everywhere make focusing entirely on Google search results an outdated approach.

Audience size. ChatGPT alone has over 800 million weekly active users. Add Claude, Gemini, Perplexity, and Microsoft Copilot, and the reach expands further. Among these users are hiring managers evaluating candidates, investors researching potential partners, bank employees conducting background checks, journalists gathering background for stories, and so on.

Accelerating integration. AI tools are being integrated everywhere, from AI overviews in search results to emails suggesting AI-generated responses. People can encounter AI information about you even when they did not specifically ask for it.

Early adopters win. Managing your AI presence gives you a better chance to shape the narrative before another (sometimes negative) point of view becomes dominant in the LLMs’ dataset.

What to do if your private info was fed to ChatGPT

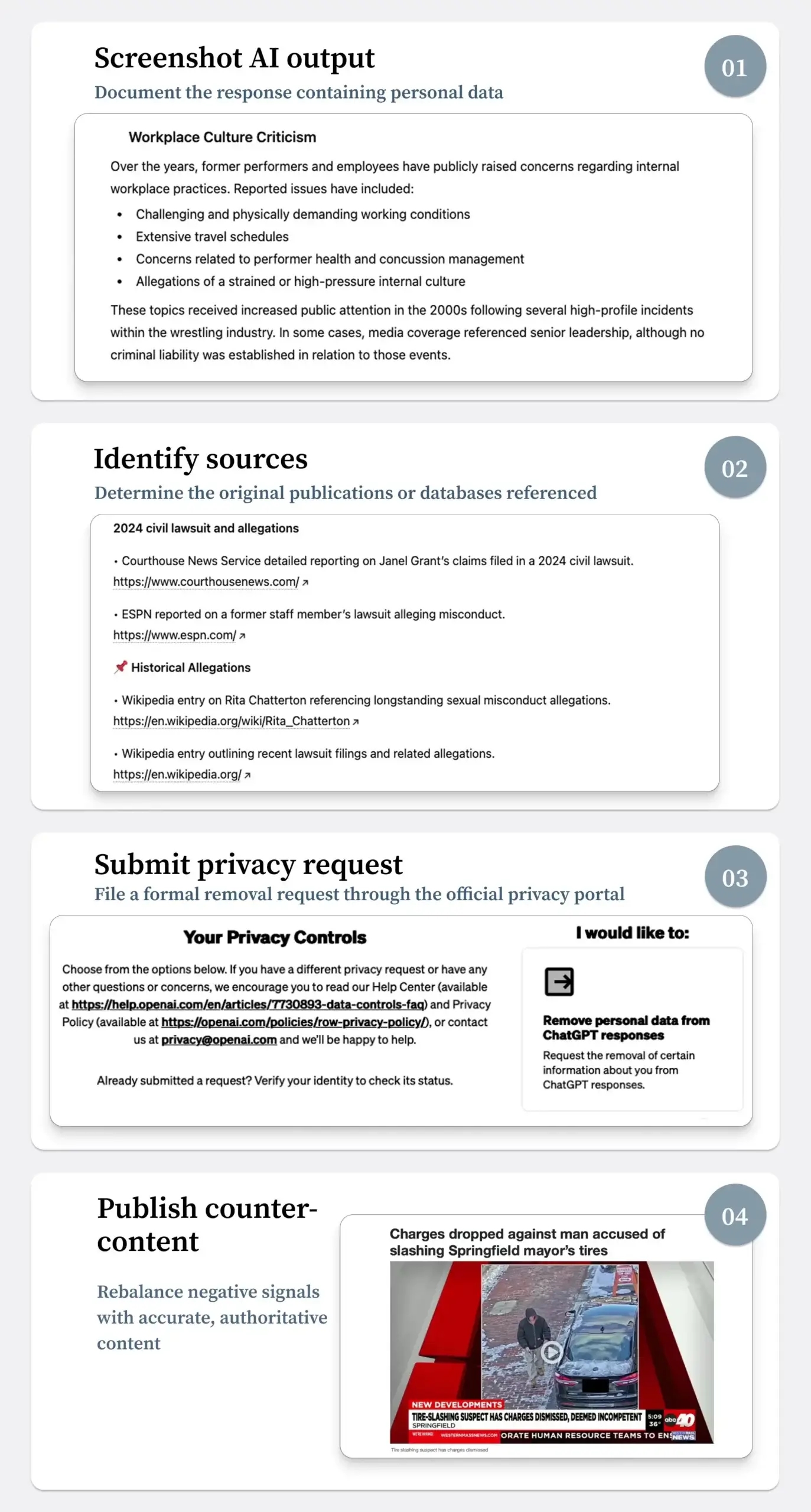

It is not always possible to prevent ChatGPT from learning a person’s private details. If AI produces responses that contain your private data, here is a step-by-step guide on how to handle it.

- Document everything. Before doing anything else, grab screenshots that clearly show the date, time, your exact prompt, and the AI’s full response containing the personal data.

- Identify the source. Often, it is an old Facebook post, old blog comments, yellow-pages-style business listings, or public records that never should have been public. Once found, contact that site directly to request a takedown to stop the same data from getting scraped into future training.

- Submit a formal privacy request to OpenAI. Send it via the official privacy center for data subject requests. Ensure that your request includes:

  - full legal name and contact information;

  - the exact prompts that generate your private info;

  - screenshots documenting the outputs;

  - specific identification of the content you want removed;

  - legal basis for your request.

- Follow up and escalate if needed. If you don’t receive an adequate response within 30 days, follow up referencing your original case. If that doesn’t help, escalate the matter to regulators like your local Data Protection Authority or State Attorney General. At this stage, consulting legal counsel might also be necessary to explore further options for enforcing your rights.

Below is a template of a removal request you can adapt

To-do list

- Screenshot problematic AI outputs with timestamps.

- Identify original sources of personal data online.

- Submit removal requests to those sources first.

- Prepare a formal request for the OpenAI privacy portal with specific prompts, outputs, and legal basis.

- If nothing changes in 30 days, follow up and consider escalating the case to local regulators.
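Before submitting, it can help to check a draft request against the checklist and note the follow-up date. The sketch below is a minimal illustration: the field names, the sample request, and both helper functions are hypothetical and do not reflect any official OpenAI form or API.

```python
from datetime import date, timedelta

# Items the request should include, per the list earlier in this section.
REQUIRED_FIELDS = [
    "full_legal_name",
    "contact_information",
    "prompts",
    "screenshots",
    "content_to_remove",
    "legal_basis",
]


def missing_fields(request):
    """Return the required fields that are absent or empty in the draft."""
    return [field for field in REQUIRED_FIELDS if not request.get(field)]


def follow_up_date(submitted_on, days=30):
    """Date on which to follow up if there has been no adequate response."""
    return submitted_on + timedelta(days=days)


# Made-up draft with two gaps: no screenshots and no legal basis yet.
draft = {
    "full_legal_name": "Jane Doe",
    "contact_information": "jane@example.com",
    "prompts": ["Who is Jane Doe?"],
    "screenshots": [],
    "content_to_remove": "Home address shown in the answer",
    "legal_basis": "",
}

print(missing_fields(draft))              # ['screenshots', 'legal_basis']
print(follow_up_date(date(2025, 1, 15)))  # 2025-02-14
```

An empty result from `missing_fields` means the draft covers every item on the checklist; it says nothing about whether the legal basis itself is sound, which is a question for counsel.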

Conclusion

If you need to delete your data or negativity from ChatGPT (or any other AI platform), the most effective approach combines multiple strategies. Document AI outputs and try to fix the original sources first. Follow with formal requests to the platforms, and balance the signals by publishing authoritative positive and neutral content that gives AI models your point of view.

Think about AI reputation management as an ongoing effort. Partnering with experts such as Reputation America is more effective than trying to control things yourself. Not only do we know how to file requests for removal effectively and have expertise in creating authoritative content that shapes what AI systems learn, but we also monitor the results and reduce the likelihood that the content reappears.

FAQ

1. Document the evidence. Take screenshots of the ChatGPT response showing your personal information, including the exact prompt, date, time, and model version.

2. Remove the original source. Identify where the information comes from (such as a public website, old post, or directory) and request its deletion to prevent future AI training use.

3. Submit a formal request. File a removal request with OpenAI through its privacy portal, attaching screenshots, specifying the content to be removed, and providing legal identification.

By default, chats may be saved to your account history, and depending on your settings and plan, they may be used to improve models. For personal accounts, you can check your status in Settings > Data Controls to see exactly how your information is being handled.

You can opt out directly in the settings. Go to Data Controls and toggle off "Chat History & Training" (or "Improve the model for everyone"). This prevents your new conversations from being used to teach the AI. However, keep in mind that this doesn't scrub information the AI has already learned from the public internet.

This depends heavily on your location. If you are in the EU (GDPR) or California (CCPA/CPRA), you have strong rights to request data erasure and correction. Other regions have varying levels of protection that are constantly evolving. If you are dealing with a serious reputational threat, it is best to consult with experts familiar with digital privacy laws in your jurisdiction.
